Unpacking the Anthropic/DoW/OpenAI kerfuffle

What's going on with Washington and AI?

Just over a week ago the US government and two of the leading AI companies got into a dispute that could shape the future of AI policy in the United States. No matter how things finally resolve themselves, the result will almost certainly have repercussions for all of us. As one might guess, there’s a lot of misinformation out there, and some of that is being repeated by major media outlets. I’ve spent the better part of the last 10 days reading first-hand sources and piecing together the full story as best I can, which I will share here.

The Timeline

For those who haven’t kept up with the news, here is the sequence of the most relevant events:

In 2025 (under the current administration) Anthropic entered into a $200 million contract with the Department of War (DoW) to provide AI services for use with classified documents. There were two “red lines” in that contract: no use of Anthropic models for mass surveillance, and no use of their models for fully autonomous weapons. The DoW wanted to remove those restrictions and allow AI for “all legal uses”.

On Friday, February 27th, the deadline for a contract resolution passed, and President Trump posted on social media that the government would be phasing out use of Anthropic’s services over a six-month period.

Less than an hour later, without consulting the President, Secretary of Defense Pete Hegseth declared Anthropic a “supply chain risk,” a potentially devastating classification that has never been applied to a private US company and could have major repercussions across the private sector. Hegseth initially claimed that he would bar any company that had any dealings at all with the DoW from working with Anthropic. Had that gone through, it could have been the end of Anthropic.

By the end of that day OpenAI announced they had entered into a contract with the DoW to provide AI services for classified information for all “lawful purposes,” with some clarifying language.

The following Monday (March 2nd) OpenAI said they were working with DoW to make the same two red lines explicit in their agreement.

On Wednesday (March 4th) Anthropic was officially notified by the DoW that they were, in fact, being designated as a supply chain risk, but that designation would not be quite as dire for their future as the original threat was.

A lot has been said in an attempt to explain what happened, and to account for some of the contradictions here. I’ll address those “explanations” presently. Many people view Anthropic here as the “good guys” for sticking to their principles, and OpenAI as the “bad guys” for being so obviously opportunistic. As you’ll soon see, the story is not quite so clear, but mistakes were certainly made.

Fully Autonomous Weapons

Anthropic had two red lines, and I think one of them is far less central to the real dispute: the use of AI for fully autonomous weapons. To be clear, Dario Amodei, CEO of Anthropic, has not objected to this use of AI on ethical grounds. In a recent CBS News interview he made it very clear that there were two reasons why autonomous weapons are a red line for him. First, the models are not yet reliable enough to guarantee that unintended targets will not be hit. Second, having fully autonomous weapons means fewer humans in the kill chain, and that means fewer people using their judgement on the battlefield. Dario has expressed a willingness to work with the government on both these issues so that Anthropic can still develop such systems.

OpenAI’s contract only allows for the use of their “cloud” models, and not models on “edge” devices. A cloud model is one that operates on OpenAI’s servers in some data center. Users access it through a web connection. An “edge” device is one that can act without a connection. Your cell phone calculator still works, even when you’ve lost a connection. That’s a simple example of an edge technology. OpenAI says it is not deploying on edge devices “where there could be a possibility” of autonomous lethal use. Cloud-only deployment makes autonomous lethal use less plausible and more controllable: a fully autonomous, reliable weapon can’t depend on a potentially slow (or non-existent) internet connection.

The Safety Stack

Some have claimed that only one of the two companies has a “safety stack,” meaning that its model would autonomously refuse requests that went against its red lines. This is false. The existing Anthropic model, Claude.gov, has a safety stack, and the new OpenAI model will have a safety stack. Both models are also overseen by “Forward Deployed Engineers,” who are human employees with security clearance who can check that the models are doing (and not doing) what they’re (not) supposed to.

Mass Surveillance

Both companies have allowed for the use of their AI models for mass surveillance of residents of foreign countries. At issue is mass surveillance of US residents. Given the Trump administration’s hostility toward certain groups, it doesn’t take much imagination to think of why this would be of interest to them. Some uses of mass surveillance are clearly against current law. However, a tremendous amount of information about US residents can be purchased legally. Lawmakers haven’t taken up this issue yet because, up until now, there was no technology capable of processing that much information and building a coherent picture of each person. AI changes that. Dario Amodei has clearly said he believes this use of AI is wrong, and is firm on this being one of his red lines. Sam Altman apparently agreed to a contract which allows AI to be used for mass surveillance, if that use is based on legally purchased records. However, by all accounts Altman did not intend to allow such uses either, and he has said this was a consequence of the contract being negotiated in a very short period of time. New wording of the OpenAI contract was announced last Monday (March 2nd), which explicitly states:

“For the avoidance of doubt, the Department understands this limitation to prohibit deliberate tracking, surveillance, or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information.”

This will be enforced by incorporation into their model’s safety stack, effectively preventing the DoW from using it for any kind of mass surveillance of US residents.

Mistakes

One thing that’s clear is that there were a lot of mistakes from all parties involved:

Dario Amodei. There is a leaked Anthropic internal memo from Friday, February 27th, just hours after Trump’s announcement that the government would be cutting ties with Anthropic, in which (among other things) Dario Amodei says, “The real reasons that [DoW] and the Trump admin do not like us is that we haven’t donated to Trump … We haven’t given dictator-style praise … ”. True or not, that was clearly a mistake to say if he still hoped to salvage any kind of deal with the government, or at least convince them not to go through with the supply chain risk designation. And indeed, the following Thursday Dario posted a sincere apology.

Sam Altman. Altman has publicly said many mistakes were made that he regrets, including the timing of the new contract so soon after things blew up with Anthropic, not thinking through the terms of that contract carefully enough, etc. Here’s the relevant quote:

“One thing I think I did wrong: we shouldn't have rushed to get this out on Friday. The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy.” —Sam Altman

Pete Hegseth. If the government wants to pull its contract with Anthropic because they can’t reach an agreement, that’s totally normal business practice. If anything, Trump may have actually been trying to cool things off in his declaration that the government will be phasing out Anthropic’s services. What made the whole situation so much worse was the supply chain risk designation, a chilling abuse of power by the US government which would essentially say to every private company, “do things our way or we’ll destroy you.” Apparently Hegseth announced Anthropic would be given this designation in a heated, poorly thought-out tweet, without consultation with his superiors (e.g. Trump). It’s not a great outcome, but at least the final supply chain risk designation didn’t go nearly as far as Hegseth’s original statement had claimed.

Conclusions

It’s very unclear to me (and every AI watcher that I follow) why things blew up between the DoW and Anthropic, when the DoW agreed to essentially the same terms with OpenAI. None of the technical explanations that I’ve read (e.g. one company has a stronger safety stack than the other) seem to properly explain the situation. A lot of people like to vilify OpenAI for a variety of reasons, but from everything I’ve read they were acting in good faith with respect to these particular negotiations.

It may simply come down to good old-fashioned politics. OpenAI has a history of willingness to work with the Trump administration, and the company’s president (Greg Brockman) is a well-known Trump donor. Dario Amodei, on the other hand, told people to vote for Kamala. While the cynic in me thinks this may be enough of an explanation for recent events, it still isn’t completely satisfying. If politics were so clearly behind what’s going on, then why was the original DoW contract given to Anthropic, and not OpenAI, in the first place?

The truth is that we may never know. The biggest missing pieces are the two contracts: the one between the DoW and Anthropic, and the one between the DoW and OpenAI. The key to the puzzle may come down to some particular wordings in those documents, but it’s unlikely that either will be released.

App of the week

Each week I’m featuring an app that I built with AI assistance. This is simultaneously a demonstration of the current technology’s potential, and its promise. I’m not a software engineer and wouldn’t have been able to build these apps on my own. AI coding assistance has opened up a whole new world of possibilities for me, and it can for you, too. Yes, AI has real problems: energy concerns, privacy concerns, employment concerns, education concerns, etc, etc. These are extremely significant issues. However, those concerns have to be weighed against the benefits of AI, and I don’t see those benefits showcased enough for a truly balanced discussion.

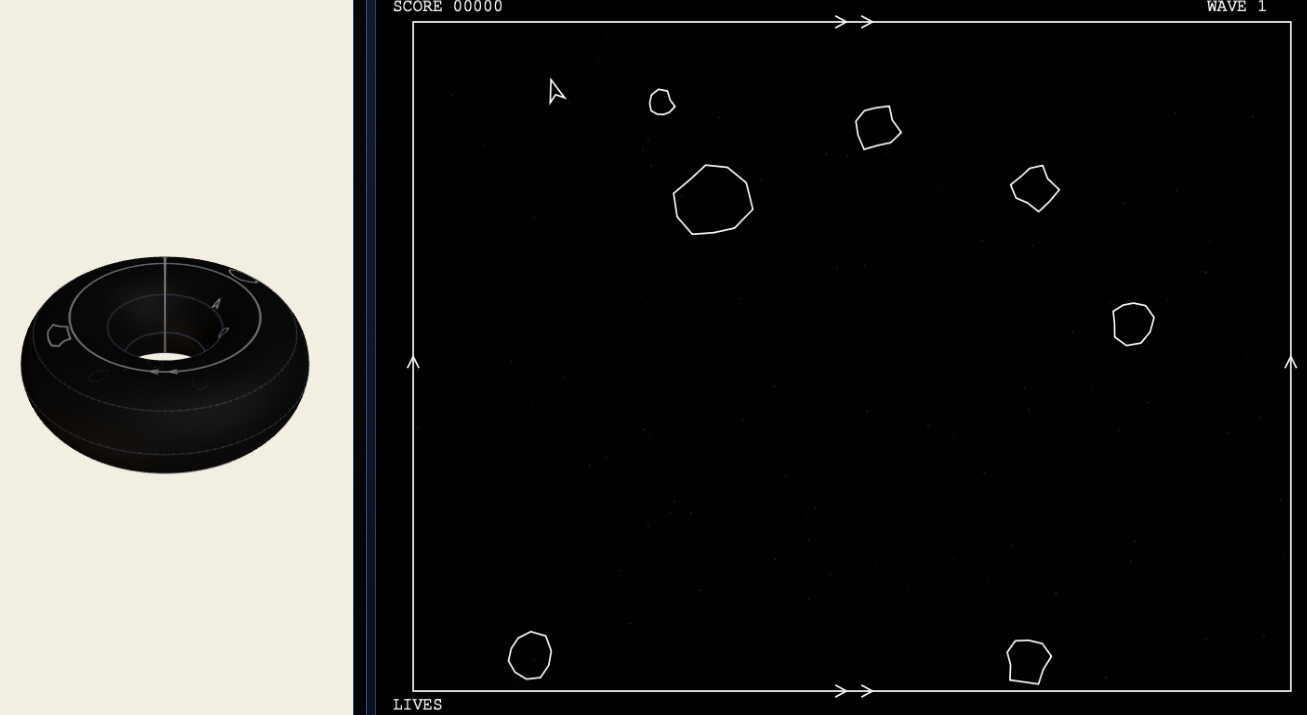

This week’s app is one I worked on for a while: Topology Asteroids! Topologists often use the old video game “Asteroids” as a way to introduce the idea that a rectangle with opposite sides identified is really a torus. This app makes that fact obvious, and at the same time introduces many other topological spaces. The best part is, you don’t need to understand any of what I just said!! You’ll just learn some topology by playing, which is the ideal kind of educational experience. Arrow keys to rotate and thrust, space bar to fire. Just like the good old days. Start with “Toroidal Universe w/ 3D view” for a familiar experience.
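For the curious, here is a minimal sketch in Python of the wrap-around idea behind the “rectangle with opposite sides identified” description. This is not the app’s actual code: the function names and the 800 by 600 screen size are assumptions chosen purely for illustration.

```python
# Hypothetical sketch: positions on a torus-shaped "screen".
# Leaving the right edge re-enters on the left, and leaving the
# top re-enters at the bottom, because opposite sides are identified.

def wrap_torus(x, y, width, height):
    """Map any (x, y) back into the rectangle [0, width) x [0, height)."""
    # Python's % returns a non-negative result for a positive modulus,
    # so negative coordinates wrap correctly as well.
    return x % width, y % height


def step(pos, vel, width, height):
    """Advance a ship one tick and wrap it around the torus."""
    x, y = pos
    vx, vy = vel
    return wrap_torus(x + vx, y + vy, width, height)


if __name__ == "__main__":
    # A ship near the right edge, moving right, reappears on the left.
    print(step((798, 300), (5, 0), 800, 600))
    # A ship near the top-left corner, moving up and left, wraps on both axes.
    print(step((2, 1), (-5, -3), 800, 600))
```

Other spaces come from changing how the edges are glued together; the torus is simply the case where each edge is matched to its opposite without any flip.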

David Bachman is a professor of Mathematics, Data Science, and Computer Science. To learn more about David’s work, visit his AI speaking and consulting site, his faculty page, or explore his mathematical art portfolio.

Thanks for this, David! This is the best description I’ve seen about the blowup. I think the cynic in you is right about the true reason for the dispute being a lack of political loyalty. Your question about why the contract was given to Anthropic in the first place is a good one, but I’m guessing the answer is simply that Anthropic had partnered with Palantir and AWS to provide Claude to intelligence and defense agencies during the last administration, so it was initially easier to just continue down that path until the contract dispute.