The AI Problems Nobody Is Solving

My biggest concerns about AI are getting worse, not better

I started this blog with the intention of giving a balanced view of the issues surrounding AI. However, my posts over the last few months have definitely skewed toward the positive. As the capabilities of AI grow, I have felt the need to keep my readers up to date on everything it can now do. The things that worry me about AI haven’t changed, so I haven’t felt the same pressing need to write about those concerns. That was a mistake. I think periodic reminders of my worries are warranted, lest I give people the impression that I’m all-in on this new technology. In this post I’ll summarize the things that worry me most about AI. If you’ve read enough of my previous posts then none of this will be new. The real take-away is that I’m still just as worried about every one of these things. And problems like these don’t just stay benign in the background. The longer they sit and fester, the worse they become.

Energy

While I’ve seen conflicting things about how much water AI uses, there doesn’t seem to be much debate about its increasing energy use. As we build out more and larger data centers to train models of exponentially growing size, I have no doubt at all that the energy use will skyrocket. In the past I’ve written about some of the offsets: newer data center designs are more energy efficient, newer models take less energy each time they respond to a user, etc. However, the Jevons paradox is real: as models get more efficient they get used more, and the net effect is that the total energy demands go up. I believe in human-caused climate change, and I don’t see how AI won’t exacerbate that problem. Soon I will write an update on some of the solutions model providers are pursuing, like fusion reactors and solar-powered space-based data centers. These ideas are not as science-fiction as they may sound, but I worry that by the time they are fully operational it will be too late to save the planet.

Employment

Last week I wrote about what the current statistics have to say about the effect of AI on the labor market. The take-away was that it does not appear that the AI-fueled job apocalypse has arrived just yet. However, there are some notable professions where the disruptions are clearly visible, and many, many more where they seem inevitable. Call center work is significantly down. Freelance artists are losing clients. Taxi and rideshare drivers are being replaced by autonomous cars. Entry-level tech jobs are shrinking. And this could all be just the tip of the iceberg. I’m a believer that AGI is possible, and at the current pace of development I think we’ll see it in the next few years. AGI is the ability of models to do anything a human might do at a computer terminal. That makes it a potential replacement for a very significant chunk of the workforce. There’s some hope that in the long term new jobs will appear that are only possible due to AI, but I doubt they’ll get here fast enough. Other solutions, like Universal Basic Income for workers displaced by AI, just seem like pipe dreams.

Education

A determined student could always find ways to cheat, but they had to be proactive about it. They had to go seek out a solution manual that they knew they weren’t supposed to have, or spend money and pay someone else to write papers for them. Now times are different. Many students have embraced AI in their daily lives. They, like millions of people all over the world, use it daily for benign purposes like answering routine questions or brainstorming ideas. They are used to asking AI for help when they have questions about all kinds of stuff, so it’s natural for them to ask it for help when they’re stuck on a school assignment. The barrier to cheating is now so low that only the most conscientious students avoid the temptation. And even for those students, there are grey areas. Is it OK for a student to ask AI to look things over after they believe they have completed an assignment? After all, an AI that points out areas where the student’s work is insufficient provides more learning opportunities, not fewer. Unfortunately, that creates a slippery slope that can easily lead to misuse. It also creates inequities in the classroom for students who only have access to less capable models. The bottom line is AI has created a nightmare situation for teachers who are just trying to do their jobs.

Existential Risk

I’ve written about this a number of times in the past, so I won’t spend too much space on it here. The brief version is that I still don’t worry too much about AI-fueled doomsday scenarios, but I also think it’s foolish to ignore the possibility. If AGI is possible, then ASI (superhuman-level intelligence) is possible. Once that happens, we had better be damn sure that it is in alignment with humanity’s needs and goals. By definition, there will be no outsmarting ASI if we lose control of it.

None of these problems are new. What’s new is the speed at which they’re becoming unavoidable. The longer they stick around, the harder they will be to solve.

With that said, I don’t want to end on a completely pessimistic note. People are certainly trying. Model providers are aware of the problems with runaway energy use, and they are actively seeking out solutions. Employment concerns are real, but students are adapting by picking majors in fields that are less susceptible to AI disruption. Teachers have come up with some very creative ways to adapt their classrooms, and in some cases are even leveraging AI to create positive learning experiences. And several prominent tech voices are sounding the alarm bells about existential risk, which will (hopefully) keep big tech accountable.

This is what it’s going to take to solve these problems: concerted, creative, and sustained efforts. There’s no shortage of people willing to point out what’s wrong with AI. What’s much harder (and much more important) is actually working toward solutions. If we want the benefits of this technology, we have to be willing to engage with its risks.

App of the Week

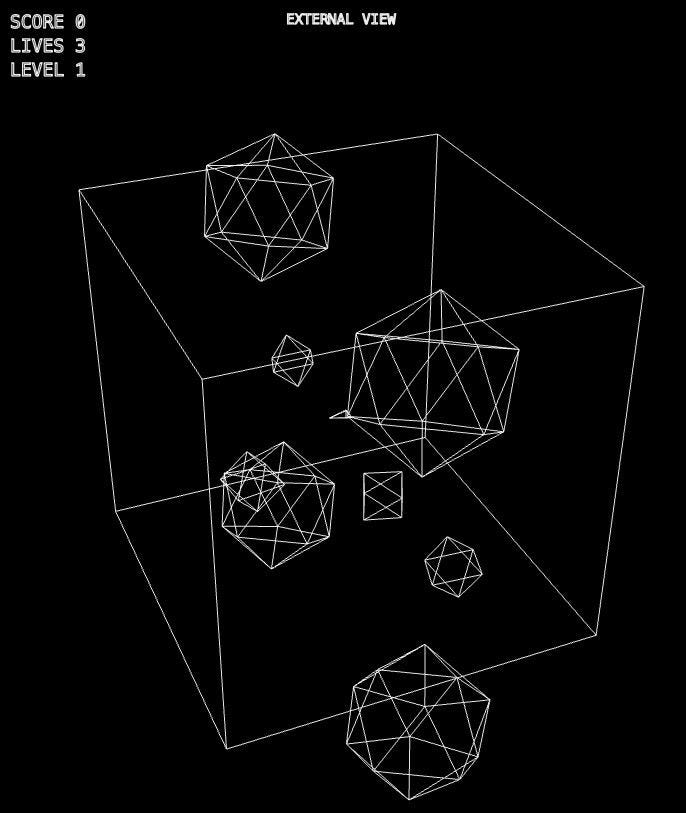

Since this post is about the negatives of AI, this week I thought I’d share the worst app I’ve made so far. The classic game “Asteroids” is played on a rectangular screen. You pilot a ship shooting at floating asteroids, and if any game asset goes off an edge of the screen, it appears on the opposite side. I thought it could be interesting to make a three-dimensional version of this game: now you are piloting a ship inside a cube, and if anything crosses one of the cube’s walls, it appears on the opposite wall. That turned out to be completely unplayable. If you are controlling a ship that can shoot in three dimensions, and you are looking at that ship from the outside, there is just no way to aim accurately. So I turned this into a split-screen experience. On the left you see the ship-in-cube from the outside, making it clear that this is a 3D version of the original game. On the right you see the scene from a first-person viewpoint. (For the topologists: on the right you are seeing the universal cover with a limited field of view, so you can see a few copies of yourself).
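For the curious, the wrap-around rule described above can be sketched in a few lines of Python. This is only a minimal illustration, not the code behind the app: the function names (`wrap`, `step`, `ghost_copies`), the unit-sized cube, and the `reach` parameter are all assumptions made up for this example.

```python
from itertools import product


def wrap(position, size=1.0):
    """Map a 3D point back into the cube [0, size) on every axis."""
    # For a positive divisor, Python's % gives a result with the divisor's
    # sign, so an object that exits through one wall re-enters at the
    # opposite wall.
    return tuple(coord % size for coord in position)


def step(position, velocity, dt, size=1.0):
    """Advance an object by velocity * dt, then apply the wrap-around rule."""
    moved = tuple(p + v * dt for p, v in zip(position, velocity))
    return wrap(moved, size)


def ghost_copies(position, size=1.0, reach=1):
    """List the shifted copies of a point in the neighboring cubes.

    A first-person view of a wrap-around world can draw these copies
    to show what lies "through" each wall (hypothetical helper).
    """
    offsets = product(range(-reach, reach + 1), repeat=3)
    return [
        tuple(p + size * k for p, k in zip(position, offset))
        for offset in offsets
        if offset != (0, 0, 0)
    ]


# A ship near the right wall moves right and reappears near the left wall.
print(step((0.75, 0.5, 0.5), (0.5, 0.0, 0.0), dt=1.0))
# Number of neighboring copies of the ship within one cube in each direction.
print(len(ghost_copies((0.5, 0.5, 0.5))))
```

With `reach=1` there are 26 neighboring copies, which is one way to picture why the first-person view can show "a few copies of yourself": each copy is the same ship as seen through one or more walls.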

The resulting game still isn’t great. With a little practice it’s playable, but I’m not sure how fun it is. Then again, I was always terrible at the original game, so maybe it’s just me. Try it out for yourself and let me know what you think in the comments! Click the image above to play.

David Bachman is a professor of Mathematics, Data Science, and Computer Science. He writes about AI and its real-world impacts. To learn more about his academic work, mathematical art, or AI speaking, consulting, and curriculum development, visit davidbachmandesign.com.