The AI Ouroboros

Early indicators point to the start of recursive self-improvement

Editorial note: A few hours after I wrote this, Zvi Mowshowitz, one of the most prolific AI bloggers, released a piece titled, “Welcome to Recursive Self-Improvement.” That article referenced a February 5th post from Dean Ball, another prominent thinker in the AI space, on the same topic. There’s less overlap than you might expect between my post and both Zvi’s and Dean’s, but if you’re interested in the topic discussed here, I highly recommend reading their posts!

Back in April of 2025, a team of prominent AI researchers published an essay titled, “AI 2027.” In it they warn of a dark future in which a downward spiral is triggered by a key development in AI research: “recursive self-improvement,” the idea that AI models may become powerful enough to conduct AI research on their own. If that happens, they may then improve themselves, much like a snake eating its tail, leading to an exponential rise in capabilities. Regardless of whether you believe such an “intelligence explosion” is possible, autonomous self-improving AI research is a milestone worth paying attention to. When AI 2027 was published, this idea was still mostly science fiction. With the capability jump of the last two months that I described in my last post, it is becoming reality.

Today, there are two main competitors in the AI-assisted coding space: Anthropic and OpenAI. Google (and even Elon Musk’s xAI) are worth paying attention to, but at this moment in time it’s really a two-horse race. OpenAI is the market leader in consumer-facing AI, but Anthropic has been favored among software engineers for some time. Last week, within a half hour of each other, both companies released new coding models: Anthropic with Claude Opus 4.6 and OpenAI with GPT 5.3 Codex. Everyone seems to have their favorite, but by most accounts they have comparable capability levels.

On February 7th, Mike Krieger, Chief Product Officer at Anthropic, said in an interview at the Cisco AI Summit:

Claude is being written by Claude. Claude products and Claude Code are being entirely written by Claude.

This echoes earlier sentiments expressed by Dario Amodei, Anthropic’s CEO. In a December post titled, “How AI is transforming work at Anthropic,” Dario said, “AI is now writing much of the code at Anthropic … substantially accelerating the rate of our progress in building the next generation of AI systems.”

It’s important to note that autonomously writing code is not the same as autonomously performing AI research. In the same post Dario addressed this: “This feedback loop is gathering steam month by month, and may be only 1–2 years away from a point where the current generation of AI autonomously builds the next.”

The most visible recent example of recursive progress is Claude Cowork, launched on January 12. Cowork was developed as a “general agent” version of the developer-focused Claude Code, designed to handle non-coding tasks such as marketing, legal, and sales. The entire tool was built and shipped in a mere ten days. How? By having Claude write Claude Cowork.

I’ve focused mostly here on Anthropic because they’ve been the most vocal about how they are using their models to build their next models. However, it appears they are not alone. On February 3rd, OpenAI released their latest coding model, GPT 5.3 Codex. In the product announcement they said, “GPT‑5.3‑Codex is our first model that was instrumental in creating itself … our team was blown away by how much Codex was able to accelerate its own development.”

In last week’s post I wrote about the recent capability jump in AI coding models. Many people emailed me expressing equal parts alarm and excitement. I share both!! All of the AI-related concerns I’ve written about here over the last few months — the effects on schooling, employment, doomsday scenarios, etc. — are being amplified in this new development cycle. With recursive self-improvement taking off, it’s going to be even more challenging for educators and policy makers to respond in real time. Crafting AI policy has always been a moving target. That target just got a jet engine.

With all that said, there is also reason for excitement. Creating software is no longer solely the domain of a select few. As many have predicted, what we’re seeing is the democratization of software. I’m very far from a software engineer. To keep up with this technology, I’ve been following the same advice I give others: just play with it! Last week I shared a sophisticated app I made for exploring concepts in non-Euclidean geometry. Since posting that, I have made seven more apps!! Each one would have taken me weeks (if not months) to make on my own, even if I did have the necessary software engineering skills. Three months ago I couldn’t have made them even with AI assistance, because the models just weren’t that capable.

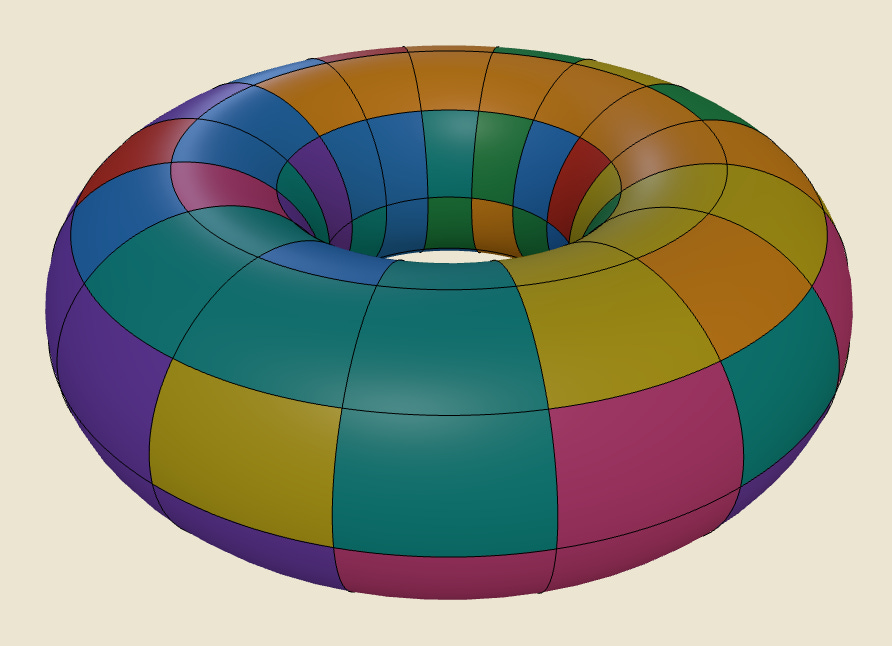

I’ll share one app that I’ve made here each week until I run out of steam. For this post, here’s a little mathematically inspired game: a Rubik’s Cube-like puzzle game on a torus. It took me just a few hours, and all I did was converse with a coding agent in plain English. Play with it by clicking the image below. It works great on both desktop and mobile, but I’ve found it’s most enjoyable on a tablet. Hold the left mouse button or use one finger to rotate rings in either direction. Use the right mouse button or two fingers to rotate the whole torus.

In two weeks I’ll do a deep dive and describe how I’m making these apps. It really is just a matter of describing what you want to a coding agent, but there are some helpful tips I can share to make the process smoother. If you’ve played around with these new coding agents (Opus 4.6 or GPT 5.3 Codex) and made something fun, feel free to share it here in the comments.

Next week I’ll talk about the implications of this new wave of coding agents for computer science education. I think that’s an important discussion for all educators, but if you teach computer science I’ll be particularly interested in your reactions. Feel free to email me and share your thoughts this week, and I may include them in the post.

David Bachman is a professor of Mathematics, Data Science, and Computer Science. To learn more about David’s work, visit his AI speaking and consulting site or his faculty page, or explore his mathematical art portfolio.