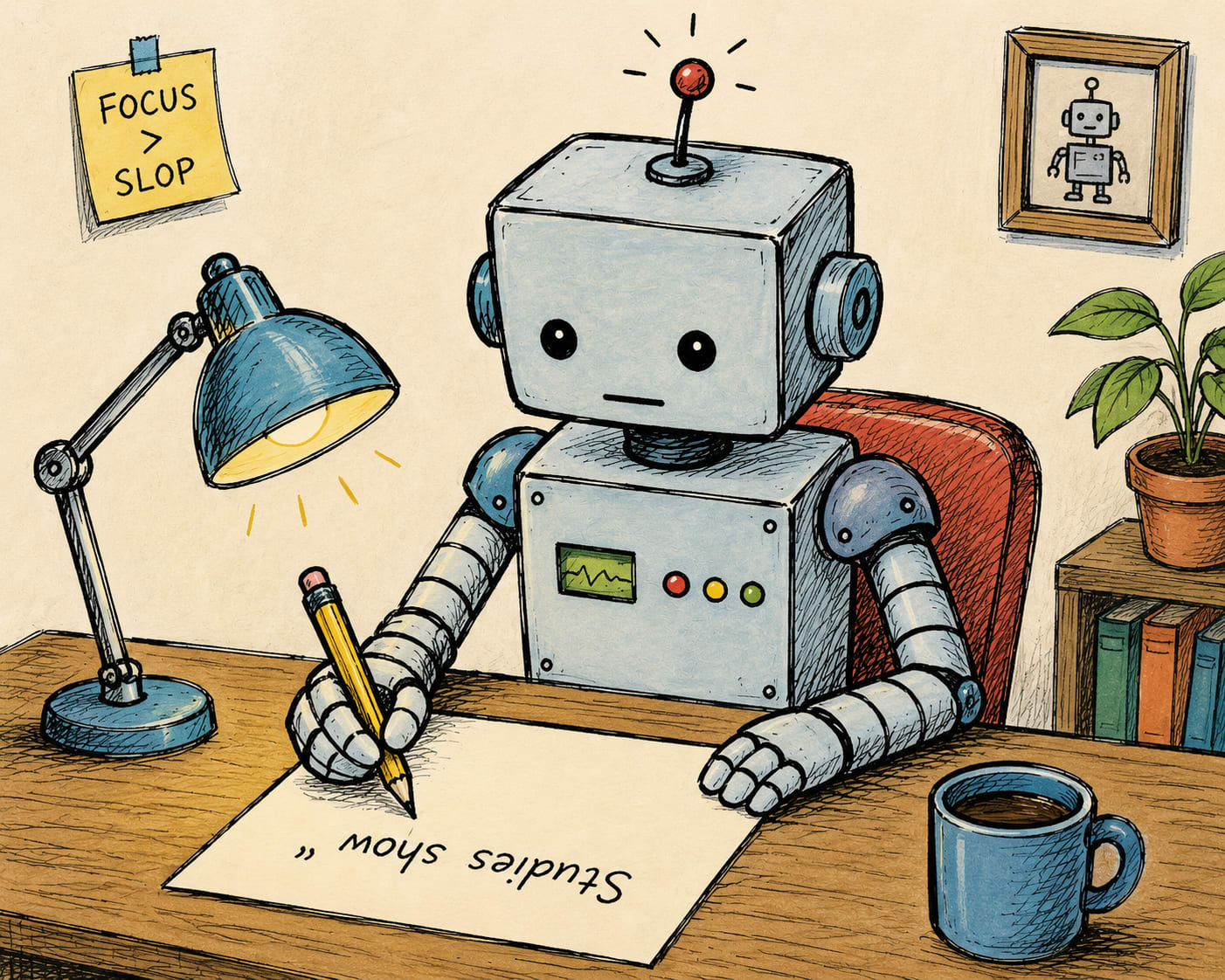

AI on AI slop

AI is ruining social media, and knows it.

Editorial Notes: This week I’m continuing with the move of my “App of the Week” section to a dedicated Thursday post. However, the AI news isn’t showing any signs of slowing, so my “AI News Bits” section is still here (scroll down!).

I’ve been trying to engage more on Substack Notes, LinkedIn, and X/Twitter, but the experience has been mostly unpleasant. These platforms are now inundated with AI slop: low-effort, low-content posts that are designed primarily for engagement. The pervasiveness of such posts makes it difficult for users to separate meaningful signal from noise. What’s fascinating (and frustrating) about this is that the AI models now seem to know what slop looks like, and yet they generate it anyway.

I’ve written several times about my biggest concerns with AI: energy, jobs, student learning, and existential risk. AI slop isn’t on the same level as those concerns, but it’s definitely a genuine problem. AI is not popular with the general American public. A recent survey showed that 50% of US adults were more concerned than excited about it, while only 10% were more excited than concerned. I suspect AI slop is responsible for a large portion of that uneasiness. Concerns about energy and jobs are big, but lurk in the background. When AI poisons the user experience on YouTube, Pinterest, and social media, it’s hitting places where many people spend hours each day for entertainment, inspiration, and connection to each other.

I know a lot of people have written about AI slop. And when there’s a lot of information out there about any topic, I think a good use of AI is to find and synthesize it. So I asked AI (specifically ChatGPT and Claude) what they know about AI slop. At the end of this post you’ll find the resulting (human-curated) AI-generated list of the “tells” of slop.

What I find interesting about the list below is how on-target it is. Most of the posts I see on social media are perfectly described here. So these models are very aware of what AI slop looks like, and yet it’s the same models that are generating it!

Here’s another sign of the slop-awareness of AI models. I asked Claude to generate the first version of this post, just to see what would happen. I didn’t use a word that it gave me, but the “thinking” it showed me before it produced a draft was fascinating. Here’s what Claude said it was going to do:

Lead with an anecdote, not a definition. Slop posts open with definitions; yours shouldn’t.

End on the moving-target argument, not a “here’s how to spot it” recap. That’s the more interesting note for an AI-focused Substack audience.

No em-dashes in the draft. Self-evident reasons. I’ll use commas, parentheses, and periods.

Again, the striking thing here is the self-awareness. You’d think that we wouldn’t be so inundated with slop since the AI models now know what slop sounds like, and how to avoid it. Clearly, that’s not happening.

This all suggests to me that with careful enough prompting, one could get an AI model to generate a social media post that avoids the tell-tale signs of slop. Surely there are savvy enough users to do this, so the problem of AI-generated social media posts may actually be much worse than we know!

I’m not the biggest fan of social media. I view it more as a necessary evil than as a desirable way to spend my time. But I do occasionally get some value out of it, and AI slop is lessening that value. My sole advice is to stay up to date on the signs of AI slop so you can quickly scroll past it to get to those posts that will have more value to you.

AI News Bits

This week Google, Microsoft, and xAI agreed to work with the US government to evaluate security vulnerabilities posed by advanced AI models. Similar agreements were already in place with Anthropic and OpenAI. So far this is all voluntary, but there is talk of the government mandating pre-release reviews, which would be a significant reversal of the Trump administration’s past AI policies.

Anthropic has experienced unprecedented growth, and as a result has struggled to secure enough compute to serve its customers. This week it entered into an agreement with Elon Musk’s SpaceX/xAI to gain use of their Colossus 1 data center, containing over 220,000 NVIDIA GPUs. Immediately after the announcement, Anthropic raised usage limits for most plans.

Incremental feature releases in Claude Code and Codex continue at an increasing pace. Probably the most notable this week is Codex’s new ability to directly control Google Chrome.

OpenAI upgraded the default model for free ChatGPT users to GPT 5.5 Instant. Users should see significantly more accurate responses to factual questions (i.e., fewer hallucinations).

Signs of AI slop (courtesy of ChatGPT and Claude)

1. Lexical tells: the famous but weaker signs

Common AI-ish vocabulary includes:

delve, underscore, realm, tapestry, landscape, intricate, meticulous, commendable, robust, pivotal, seamless, cutting-edge, innovative, transformative, multifaceted, nuanced, compelling, leverage, harness, utilize, streamline, navigate, unlock, empower, showcase.

There is real evidence that some of these words surged in biomedical and academic writing after ChatGPT became widely available.
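For readers who want to try this tell on their own feed, here is a minimal Python sketch that measures how much of a post is made up of the words listed above. The function name `lexical_tell_rate` and the exact-match rule are my own assumptions for illustration (so "delves" would not count as "delve"), and a high score is a weak hint at best, not proof that a post was machine-written.

```python
import re

# Word list copied from the vocabulary above.
AI_ISH_WORDS = {
    "delve", "underscore", "realm", "tapestry", "landscape", "intricate",
    "meticulous", "commendable", "robust", "pivotal", "seamless",
    "cutting-edge", "innovative", "transformative", "multifaceted",
    "nuanced", "compelling", "leverage", "harness", "utilize",
    "streamline", "navigate", "unlock", "empower", "showcase",
}


def lexical_tell_rate(text: str) -> float:
    """Return the fraction of words in `text` that are on the list.

    Hypothetical helper: matching is exact and case-insensitive, and
    hyphenated words such as "cutting-edge" are treated as one word.
    """
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in AI_ISH_WORDS)
    return hits / len(words)
```

Because plenty of humans also write "robust" and "landscape", a rate like this is more useful for comparing posts against each other than for judging any single one.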

2. The corrective-pivot tic: “not X, but Y”

This is one of the most recognizable current AI/LinkedIn patterns:

It’s not about working harder. It’s about working smarter.

AI isn’t replacing humans. It’s augmenting them.

The future isn’t about tools. It’s about mindset.

This isn’t a technology problem. It’s a people problem.
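The four examples above share a shape that a regular expression can roughly capture: a sentence containing a negation ("isn’t", "it’s not"), followed directly by a sentence that opens with "It’s". The Python sketch below is an assumption-laden illustration, not a real detector. The pattern and the name `count_corrective_pivots` are mine, and it will miss many variations and flag some perfectly human sentences.

```python
import re

# Hypothetical pattern: a negation, the rest of that sentence, then a
# new sentence starting with "It's". Both straight and curly
# apostrophes are accepted.
PIVOT = re.compile(
    r"\b(?:isn['’]t|is not|it['’]s not|aren['’]t)\b"  # the "not X" half
    r"[^.!?]*[.!?]\s+"                                # end of that sentence
    r"it['’]s\b",                                     # the "but Y" half
    re.IGNORECASE,
)


def count_corrective_pivots(text: str) -> int:
    """Count non-overlapping "not X. It's Y." pairs in `text`."""
    return len(PIVOT.findall(text))
```

Run on the four example lines joined into one paragraph, this counts four pivots. A single hit in a long post means little, but several in a short post is the cadence described here.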

3. The “LinkedIn revelation” cadence

AI-generated social posts often sound like this:

I used to think productivity was about doing more.

I was wrong.

It’s about doing what matters.

Here are 5 lessons I learned.

4. Confident vagueness

Watch for:

Studies show…

Experts agree…

Many creators are realizing…

Businesses across industries are discovering…

In recent years…

Research suggests…

5. Em dashes: real discourse, weak evidence

The em dash has become the folk detector of AI writing, but relying on it is dangerous. Many human writers love em dashes, and journalists, essayists, academics, and bloggers have used them forever. The better point is narrower. AI often uses em dashes in a particular cadence:

This isn’t just a tool — it’s a shift in how we think.

The issue isn’t speed — it’s trust.

The answer isn’t more content — it’s better judgment.

David Bachman is a professor of Mathematics, Data Science, and Computer Science. He writes about AI and its real-world impacts. To learn more about his academic work, mathematical art, or AI speaking, consulting, and curriculum development, visit davidbachmandesign.com.